Delivering live video at global scale is fundamentally different from serving pre-recorded content. While Video on Demand (VOD) allows time for preparation, caching, and optimization, live streaming introduces strict time constraints where every second matters. Netflix, traditionally known for its VOD dominance, engineered a specialized system to meet the demands of real-time broadcasting—capable of delivering live streams to tens of millions of concurrent viewers with minimal delay.

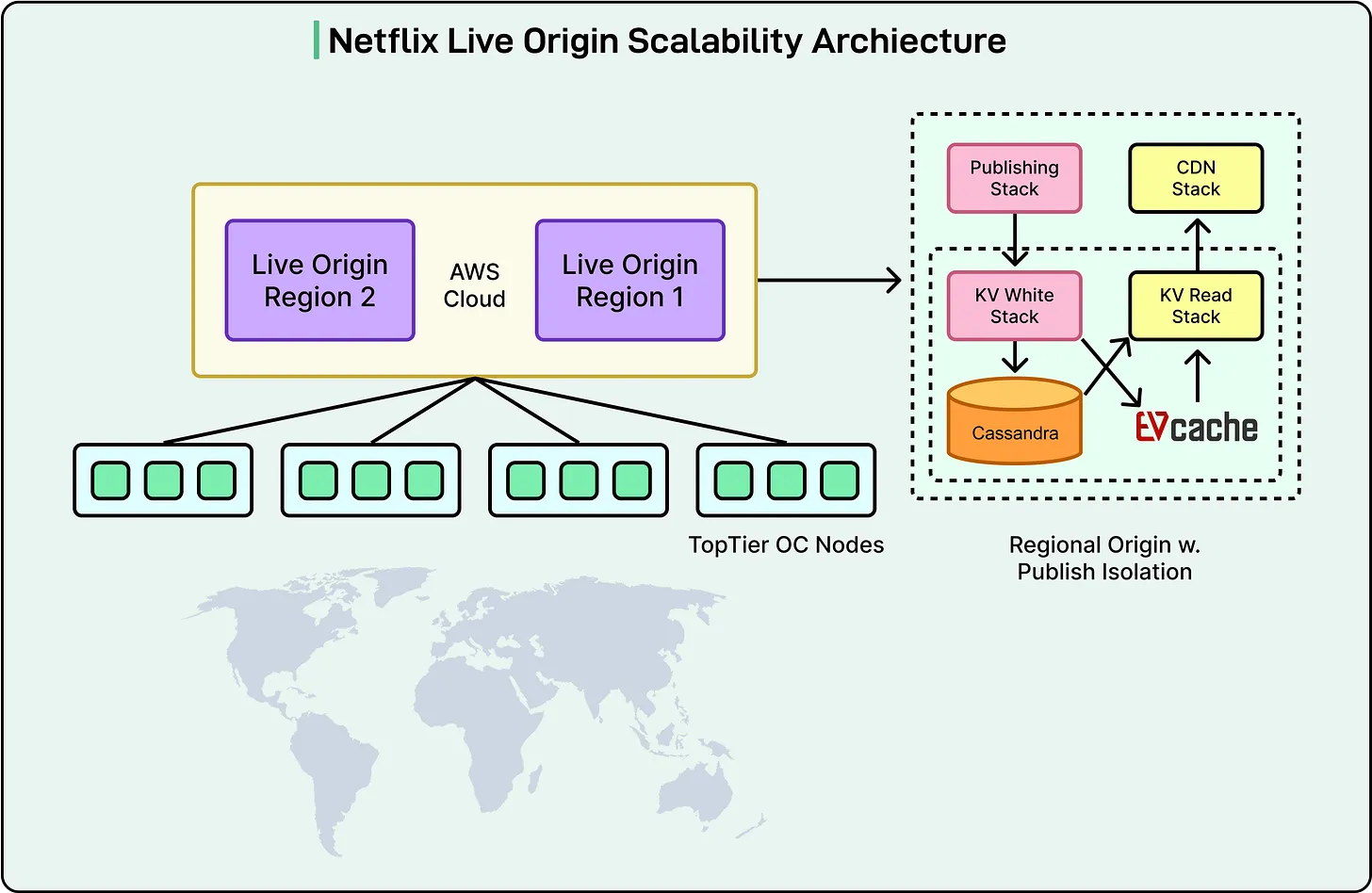

At the center of this system is a component known as the Live Origin, a purpose-built service that acts as the control layer between live video pipelines and Netflix’s global Content Delivery Network (CDN). This article examines how Netflix designed this system to achieve extreme scalability, resilience, and speed.

The Core Problem: Real-Time at Global Scale

In live streaming, content must be captured, encoded, packaged, and distributed almost instantly. Unlike pre-recorded content, there is no buffer for failure or delay. A single disruption in the pipeline can impact millions of viewers simultaneously.

Netflix needed a system that could:

- Process and distribute video segments within seconds

- Scale to tens of millions of concurrent streams

- Maintain reliability despite unpredictable live input

- Optimize delivery across a globally distributed network

The Live Origin was designed specifically to address these constraints.

Architecture Overview: A Controlled Gateway

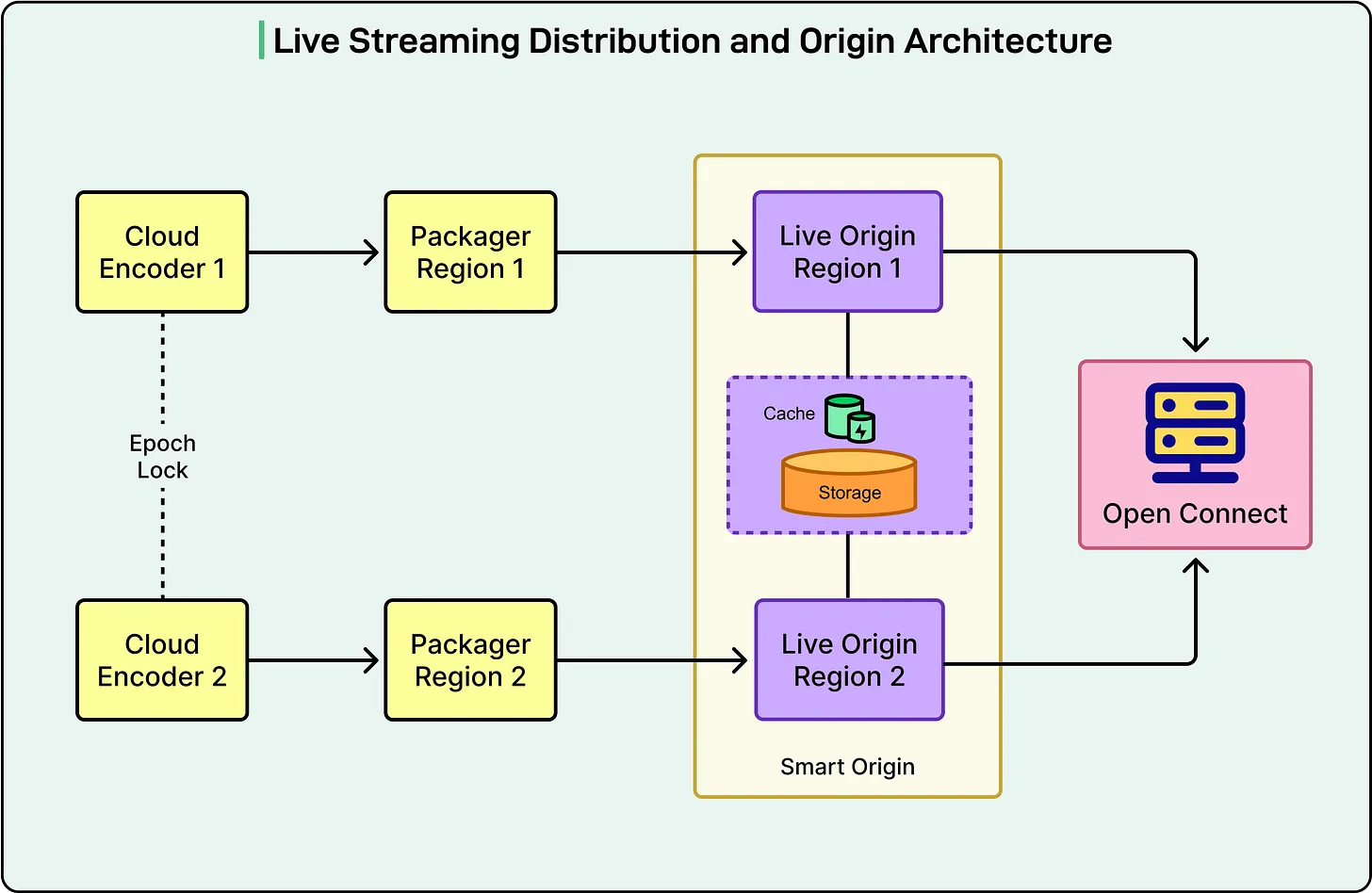

The Live Origin operates as a distributed microservice running on cloud infrastructure. Its role is straightforward but critical: receive processed video segments from upstream systems and make them available to CDN nodes for global distribution.

The workflow follows a simple pattern:

- Video segments are uploaded by packaging systems

- CDN nodes retrieve these segments using the same identifiers

- The Live Origin acts as a validation and distribution checkpoint

This simplicity is intentional. It reduces overhead while enabling precise control over how content flows through the system.

Redundancy Through Dual Pipelines

Live streaming is inherently error-prone. Issues such as missing frames, audio glitches, or timing inconsistencies are common due to the real-time nature of content ingestion.

To mitigate this, Netflix runs two independent streaming pipelines simultaneously. Each pipeline:

- Operates in a separate cloud region

- Uses independent encoders and input sources

- Produces its own version of each video segment

When a segment is requested, the Live Origin evaluates outputs from both pipelines and selects the best available version. This redundancy significantly reduces the likelihood of delivering defective content.

However, this approach is not without cost. Running duplicate pipelines doubles infrastructure requirements and introduces synchronization complexity. The trade-off is improved reliability—critical for large-scale live events.

Predictable Streaming with Segment Templates

Rather than dynamically updating manifests for every new segment, Netflix uses a fixed segment duration model. Each video chunk is typically two seconds long, and segment identifiers follow a predictable pattern.

This design allows the system to:

- Anticipate when segments should be available

- Validate incoming requests efficiently

- Reduce metadata overhead

Predictability is key here. It enables faster decision-making without relying on constant state updates.
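
As a rough illustration of why a fixed duration helps, the sketch below derives a segment's index and earliest availability time from the clock alone, with no manifest lookup. The class, its field names, and the file-naming pattern are hypothetical; only the two-second duration comes from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SegmentTemplate:
    """Hypothetical template: fixed-duration segments numbered from a start time."""
    start_epoch_ms: int               # when segment 0 begins (assumed field)
    segment_duration_ms: int = 2000   # two-second segments, as described above

    def index_at(self, now_ms: int) -> int:
        """Index of the segment whose time span contains now_ms."""
        elapsed = now_ms - self.start_epoch_ms
        if elapsed < 0:
            raise ValueError("event has not started")
        return elapsed // self.segment_duration_ms

    def available_at_ms(self, index: int) -> int:
        """Earliest time a segment can be complete: the end of its time span."""
        return self.start_epoch_ms + (index + 1) * self.segment_duration_ms

    def name(self, index: int) -> str:
        """Illustrative naming pattern only; the real identifiers are not given."""
        return f"segment_{index}.m4s"


template = SegmentTemplate(start_epoch_ms=1_000_000)
current = template.index_at(1_004_500)        # 4.5 s into the event
ready_at = template.available_at_ms(current)  # end of that segment's span
```

Because every value is computed from the start time and the duration, any node holding the template can answer "should this segment exist yet?" without asking another service.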

Intelligent Segment Selection

The Live Origin does more than store and serve content—it actively evaluates segment quality. When a request arrives:

- It checks segments from both pipelines

- It prioritizes valid, defect-free segments

- It falls back to alternative sources if needed

Defect detection is assisted by metadata provided during packaging, which flags issues such as missing frames or corrupted data. If both pipelines fail (a rare scenario), the system passes this information downstream so client devices can handle playback gracefully.
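
The selection logic can be sketched roughly as follows. This is an assumption-laden illustration, not Netflix's implementation: the `Candidate` type, the defect labels, and the rule of ranking by defect count are all invented here to show the shape of the decision.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Candidate:
    """One pipeline's copy of a segment (hypothetical structure)."""
    pipeline: str
    data: bytes
    defects: frozenset = frozenset()  # e.g. {"missing_frames"}, flagged at packaging


def select_segment(
    primary: Optional[Candidate], backup: Optional[Candidate]
) -> Tuple[Optional[Candidate], bool]:
    """Return (chosen candidate, degraded flag).

    degraded is True when no defect-free copy exists, so the caller can
    pass that fact downstream to the client.
    """
    present = [c for c in (primary, backup) if c is not None]
    if not present:
        return None, True
    # Fewest defects wins; min() keeps the first of equal candidates,
    # so the primary pipeline is preferred on a tie.
    best = min(present, key=lambda c: len(c.defects))
    return best, bool(best.defects)


flawed = Candidate("pipeline-a", b"...", frozenset({"missing_frames"}))
clean = Candidate("pipeline-b", b"...")
chosen, degraded = select_segment(flawed, clean)  # picks the clean copy
```

Returning the degraded flag alongside the segment mirrors the idea that, when both pipelines fail, the problem is reported to the client instead of being hidden.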

Optimizing CDN Behavior

Netflix’s CDN, known as Open Connect, was originally optimized for VOD delivery. Live streaming required several adaptations.

Request Filtering

CDN nodes can determine whether a requested segment should exist based on timing rules. Requests outside the valid range are rejected immediately, reducing unnecessary load on the origin.

Handling Early Requests

If a request arrives for a segment that has not yet been published, the system avoids repeated failures by:

- Returning temporary responses with expiration hints

- Holding certain requests open until the segment becomes available

This reduces redundant traffic and improves efficiency at the network edge.
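
A minimal sketch of the two behaviors, assuming a node can compute when a segment is due from its index. The action names, the `max_hold_ms` threshold, and the rule for choosing between holding and responding are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EarlyDecision:
    action: str       # "serve", "hold", or "retry_later" (illustrative names)
    wait_ms: int = 0  # hold time, or the expiry hint on a temporary response


def handle_request(index: int, now_ms: int, start_ms: int,
                   segment_ms: int = 2000, max_hold_ms: int = 500) -> EarlyDecision:
    """Decide how to treat a request for segment `index` at time now_ms."""
    # A segment is complete at the end of its time span.
    due_ms = start_ms + (index + 1) * segment_ms
    remaining = due_ms - now_ms
    if remaining <= 0:
        return EarlyDecision("serve")
    if remaining <= max_hold_ms:
        # Nearly ready: keep the request open instead of failing it.
        return EarlyDecision("hold", remaining)
    # Too early to hold: answer now, and say when the answer expires.
    return EarlyDecision("retry_later", remaining)


decision = handle_request(index=4, now_ms=9_700, start_ms=0)  # due at 10_000
```

Holding only the nearly-ready requests bounds how many connections stay open, while the expiry hint stops clients and caches from retrying before the segment can exist.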

Fine-Grained Caching

Because a new segment is produced every couple of seconds, cache controls that work in whole seconds are too coarse for live timing. Netflix introduced millisecond-level caching controls to better align with the live segment cadence.

Metadata Delivery via HTTP Headers

In addition to video content, live streams often require real-time metadata—such as event markers, ad breaks, or content notifications.

Netflix distributes this information using HTTP headers attached to video segments. These headers:

- Persist across subsequent segments

- Are cached and updated at CDN nodes

- Ensure all viewers receive consistent event data regardless of playback position

This approach avoids the need for separate metadata channels, simplifying the architecture while maintaining scalability.
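
One way to picture the persistence is a small piece of per-stream state on each CDN node that remembers the newest event metadata and attaches it to every segment response. The header names and the sequence-number scheme below are invented for this sketch; the source does not name the actual headers.

```python
class EdgeEventState:
    """Hypothetical per-stream state held by a CDN node."""

    HEADER = "X-Live-Event"          # invented header name
    SEQ_HEADER = "X-Live-Event-Seq"  # invented; assumed to increase over time

    def __init__(self) -> None:
        self.seq = -1
        self.value = None

    def observe(self, origin_headers: dict) -> None:
        """Remember the newest metadata seen on any origin response."""
        seq = origin_headers.get(self.SEQ_HEADER)
        if seq is not None and int(seq) > self.seq:
            self.seq = int(seq)
            self.value = origin_headers.get(self.HEADER)

    def decorate(self, response_headers: dict) -> dict:
        """Return a copy of the headers with the current metadata attached."""
        out = dict(response_headers)
        if self.value is not None:
            out[self.HEADER] = self.value
            out[self.SEQ_HEADER] = str(self.seq)
        return out


state = EdgeEventState()
state.observe({"X-Live-Event": "ad-break-start", "X-Live-Event-Seq": "3"})
headers = state.decorate({"Content-Type": "video/mp4"})
```

Because the node decorates every response, a viewer who is a minute behind the live edge still receives the same event data as one at the edge.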

Advanced Cache Invalidation and Control

Live events often require rapid updates or corrections. Netflix implemented a flexible invalidation system that allows:

- Clearing cached content by versioning identifiers

- Targeting specific segments or pipeline outputs

- Excluding problematic data during playback

This capability is especially important during high-profile events where errors must be corrected quickly without disrupting the entire stream.
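
Version-based invalidation can be sketched as a cache whose keys embed a version number per scope. Bumping a version makes older entries unreachable without having to find and delete them. The class and the three scopes (stream, pipeline, segment) are assumptions chosen to match the targeting options listed above.

```python
class VersionedCache:
    """Illustrative cache: bumping a scope's version hides its older entries."""

    def __init__(self) -> None:
        self._data = {}
        self._versions = {}  # scope tuple -> version number

    def _key(self, stream: str, pipeline: str, index: int):
        scopes = ((stream,), (stream, pipeline), (stream, pipeline, index))
        versions = tuple(self._versions.get(s, 0) for s in scopes)
        return (stream, pipeline, index, versions)

    def put(self, stream: str, pipeline: str, index: int, payload: bytes) -> None:
        self._data[self._key(stream, pipeline, index)] = payload

    def get(self, stream: str, pipeline: str, index: int):
        return self._data.get(self._key(stream, pipeline, index))

    def invalidate(self, *scope) -> None:
        """Invalidate a stream, one pipeline's output, or a single segment."""
        self._versions[scope] = self._versions.get(scope, 0) + 1


cache = VersionedCache()
cache.put("event-1", "pipeline-a", 7, b"bad")
cache.put("event-1", "pipeline-b", 7, b"good")
cache.invalidate("event-1", "pipeline-a")   # hide everything from pipeline A
```

In this sketch stale entries stay in memory until normal eviction removes them; the trade is a little wasted space in exchange for invalidation that takes effect with a single counter update.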

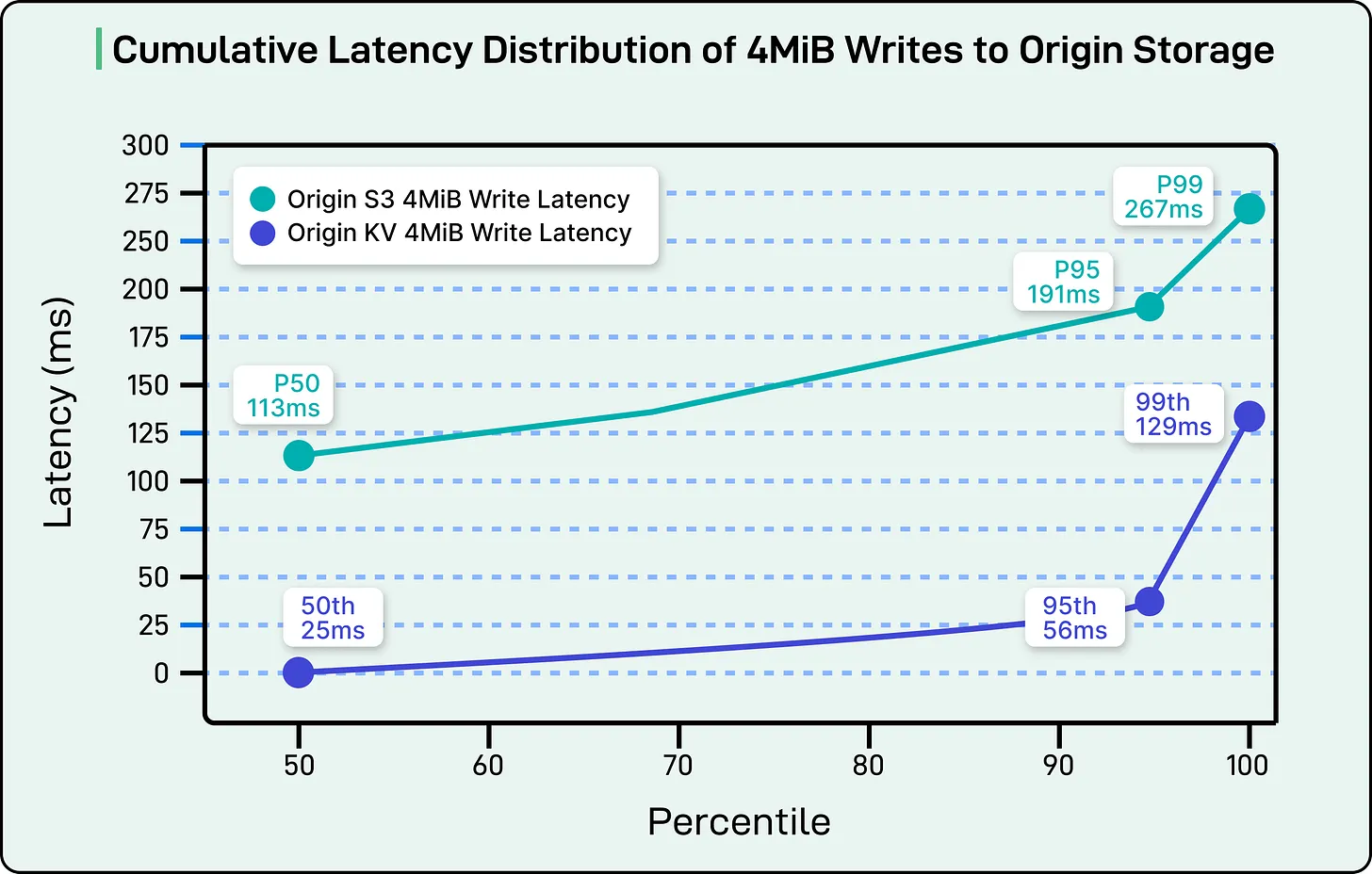

Evolving the Storage Layer

Initially, Netflix relied on cloud object storage for handling live segments. While adequate for smaller workloads, it proved insufficient for high-scale live streaming due to strict latency requirements.

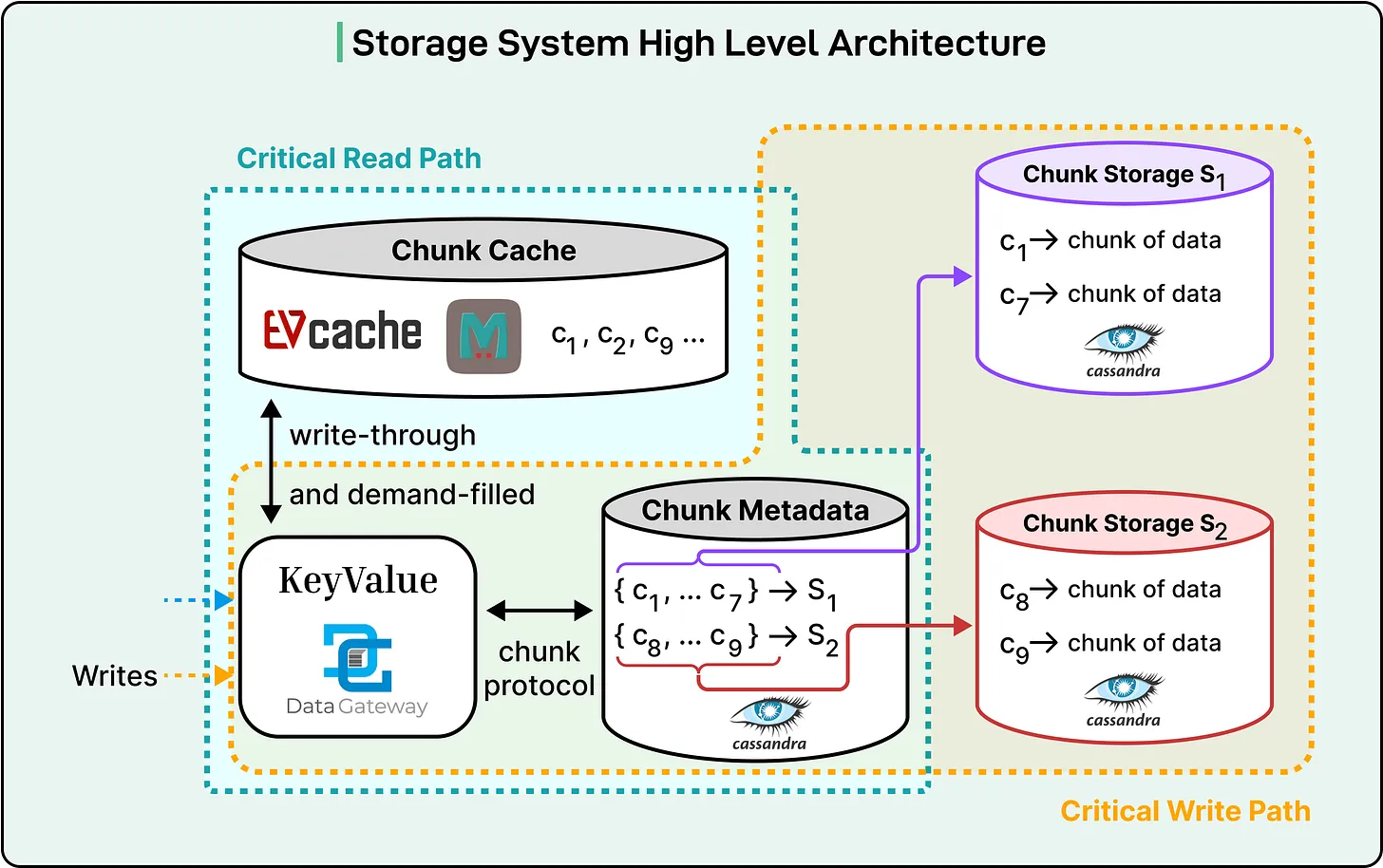

Netflix transitioned to a custom storage architecture built on a distributed key-value system. Key characteristics include:

- High write availability with low latency

- Efficient handling of large data chunks

- Strong consistency within regions

- High throughput for both reads and writes

Large video segments are divided into smaller chunks, enabling retries and improving reliability. This chunking strategy also aligns with the underlying storage engine’s strengths.
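
The benefit of chunking for retries can be shown with a short sketch: when one chunk write fails, only that chunk is sent again. The `put` and `get` callables stand in for a key-value client, and the key layout, chunk size, and retry count are assumptions.

```python
from typing import Callable, List


def split_chunks(data: bytes, chunk_size: int) -> List[bytes]:
    """Split data into chunks of at most chunk_size bytes."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def write_segment(put: Callable[[str, bytes], None], key: str, data: bytes,
                  chunk_size: int = 64 * 1024, max_attempts: int = 3) -> int:
    """Write each chunk under "<key>/<n>", retrying only the chunk that failed.

    Returns the number of chunks written.
    """
    chunks = split_chunks(data, chunk_size)
    for n, chunk in enumerate(chunks):
        for attempt in range(1, max_attempts + 1):
            try:
                put(f"{key}/{n}", chunk)
                break
            except OSError:
                if attempt == max_attempts:
                    raise  # give up after the last attempt
    return len(chunks)


def read_segment(get: Callable[[str], bytes], key: str, chunk_count: int) -> bytes:
    """Reassemble a segment from its numbered chunks."""
    return b"".join(get(f"{key}/{n}") for n in range(chunk_count))


store = {}
count = write_segment(store.__setitem__, "event-1/seg-7", b"x" * 150_000)
restored = read_segment(store.__getitem__, "event-1/seg-7", count)
```

A failed write costs one chunk's worth of retransmission instead of the whole segment, which matters when the segment must be readable within a fraction of its two-second duration.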

To handle extreme read demand, Netflix introduced a distributed caching layer that serves most requests directly from memory. This significantly reduces pressure on the storage backend and allows the system to scale to massive throughput levels.

Managing Traffic at Scale

Live streaming generates diverse traffic patterns, including:

- Real-time playback (most critical)

- Delayed viewing (DVR mode)

- Background or redundant requests

Netflix prioritizes these requests based on their impact:

- Live-edge playback receives highest priority

- DVR and rewind requests are deprioritized during congestion

This prioritization ensures that the majority of users experience uninterrupted streaming, even under heavy load.

Additionally, the system uses rate limiting and temporary caching strategies to absorb traffic spikes. For example, repeated low-priority requests may be temporarily suppressed to stabilize the system.
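
A minimal sketch of priority-based load shedding, under stated assumptions: requests are classified by their distance from the live edge, and each class has a load threshold above which it is rejected. The class names, the two-segment live window, and the threshold values are illustrative, not figures from Netflix.

```python
from enum import IntEnum


class Priority(IntEnum):
    LIVE_EDGE = 0
    DVR = 1
    BACKGROUND = 2


# Load (0.0 to 1.0) above which each class is shed; values are illustrative.
SHED_ABOVE = {
    Priority.LIVE_EDGE: float("inf"),  # never shed in this sketch
    Priority.DVR: 0.8,
    Priority.BACKGROUND: 0.6,
}


def classify(index: int, live_index: int, prefetch: bool = False,
             live_window: int = 2) -> Priority:
    """Treat requests within live_window segments of the edge as live."""
    if prefetch:
        return Priority.BACKGROUND
    if live_index - index <= live_window:
        return Priority.LIVE_EDGE
    return Priority.DVR


def admit(priority: Priority, load: float) -> bool:
    """Admit the request unless load has passed its class threshold."""
    return load < SHED_ABOVE[priority]


request_class = classify(index=50, live_index=100)  # far behind the edge
allowed = admit(request_class, load=0.9)            # shed under congestion
```

As load rises, background traffic is dropped first and DVR traffic second, so capacity is reserved for viewers at the live edge.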

Handling Invalid Requests Efficiently

Requests for non-existent segments—often caused by timing mismatches—can overwhelm systems if not handled properly.

Netflix addresses this through hierarchical metadata:

- Event-level validation

- Stream-level timing checks

- Segment-level existence verification

Invalid requests are rejected early, often with cacheable responses that prevent repeated attempts. This minimizes unnecessary load on both the origin and storage layers.
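
The three levels can be sketched as a chain of checks that exits at the first failure and tells the edge how long it may cache the rejection. The data shapes, status codes, and cache lifetimes are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    status: int             # HTTP-style status code (assumed)
    negative_cache_ms: int  # how long a rejection may be cached; 0 for success
    reason: str


def validate(event_id: str, stream_id: str, index: int, now_ms: int,
             events: dict, stored: set) -> Verdict:
    """Check event, then stream timing, then segment existence.

    events: {event_id: {stream_id: (start_ms, segment_ms)}}
    stored: set of (event_id, stream_id, index) tuples known to exist
    """
    streams = events.get(event_id)
    if streams is None:
        return Verdict(404, 60_000, "unknown event")
    timing = streams.get(stream_id)
    if timing is None:
        return Verdict(404, 60_000, "unknown stream")
    start_ms, segment_ms = timing
    live_index = (now_ms - start_ms) // segment_ms
    if index < 0 or index > live_index:
        # Cache briefly: the answer may change once the segment is due.
        return Verdict(404, segment_ms, "outside valid time range")
    if (event_id, stream_id, index) not in stored:
        return Verdict(404, segment_ms, "segment missing")
    return Verdict(200, 0, "ok")


events = {"event-1": {"video-1080p": (0, 2000)}}
stored = {("event-1", "video-1080p", 3)}
verdict = validate("event-1", "video-1080p", 99, 10_000, events, stored)
```

The cheapest checks run first, so a request for an unknown event never reaches the timing calculation, and a request outside the valid time range never reaches storage.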

Proven Performance at Massive Scale

Netflix’s live streaming architecture has been tested under extreme conditions, including events with tens of millions of concurrent viewers. During peak scenarios, the system has demonstrated its ability to:

- Deliver streams reliably across global regions

- Maintain low latency under heavy load

- Scale dynamically without compromising quality

The design prioritizes reliability and user experience over cost efficiency—a deliberate decision given the stakes of live broadcasting.

Conclusion

Netflix’s Live Origin system represents a highly specialized solution to the challenges of real-time video delivery at global scale. By combining redundant pipelines, intelligent content selection, advanced caching strategies, and a purpose-built storage architecture, Netflix has created a system capable of delivering live video to tens of millions of concurrent viewers within seconds.

The key insight is that live streaming performance is not determined by a single component. It emerges from the interaction of multiple layers—encoding pipelines, origin services, CDN behavior, storage systems, and traffic management strategies.

While this level of engineering complexity is justified for Netflix’s scale and requirements, it is not universally applicable. For most organizations, simpler streaming solutions or managed services will provide a better balance between cost and performance.

Netflix’s approach, however, sets a benchmark for what is possible when infrastructure is designed specifically for real-time, large-scale video delivery.