Visual content production has traditionally depended on equipment, crew coordination, scheduling, and layered post-production workflows. While this structure delivers quality, it also introduces cost, delay, and operational friction. As digital platforms demand higher output at faster intervals, many organizations find their production systems under strain.

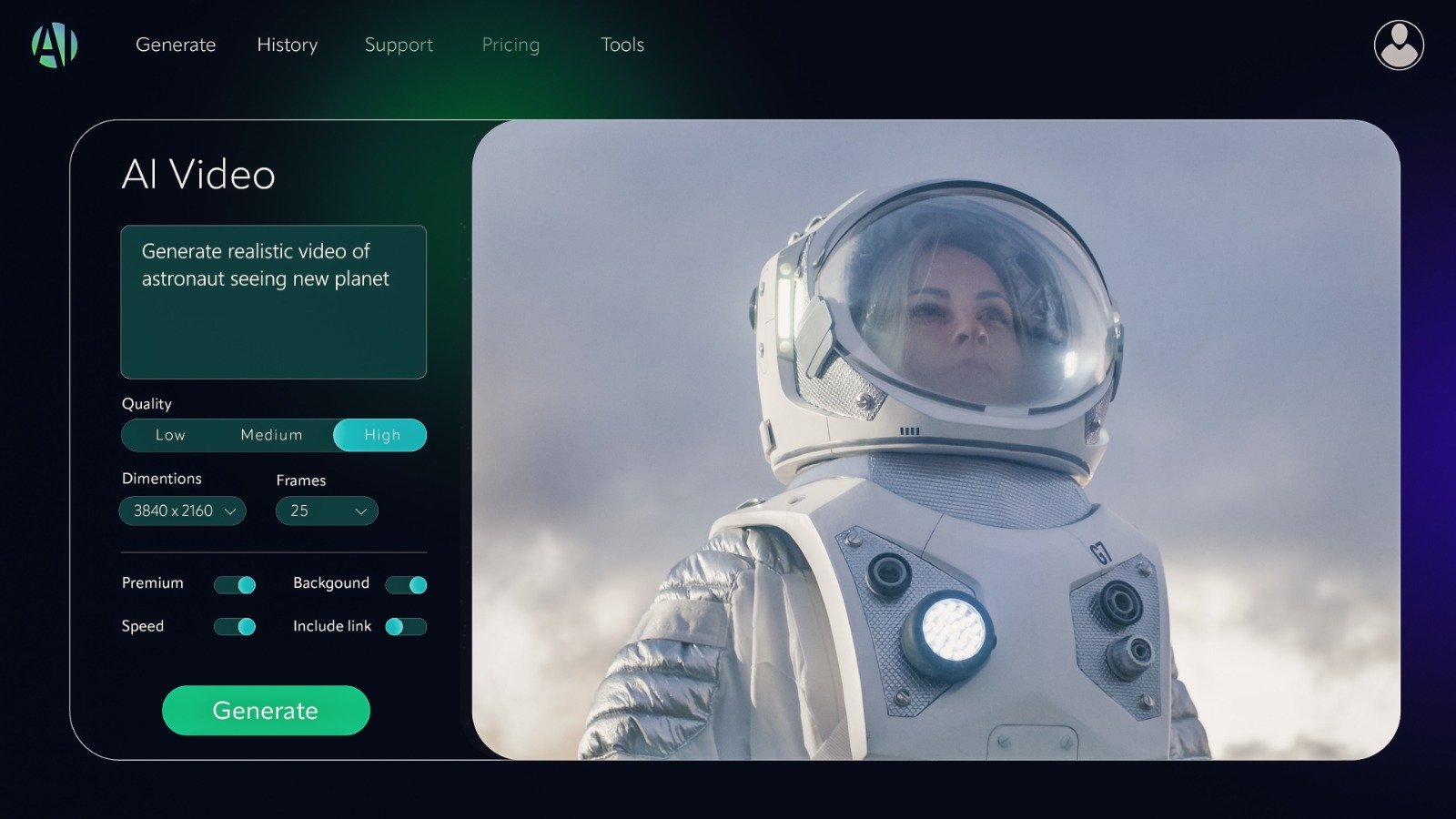

Seedance 2.0 AI Video represents a structural shift in how visual content is conceptualized and produced. By converting natural language prompts into cohesive, cinematic scenes, the platform compresses timelines that once required weeks of coordination. More importantly, it improves reliability in areas where earlier AI video systems struggled.

This is not simply an incremental upgrade in generative capability. It reflects a broader transformation in creative infrastructure.

Meeting the Demand for Faster Output

Modern teams operate under increasing pressure to produce consistent, high-quality visual content across marketing, training, social media, and internal communications. Traditional production pipelines were not designed for this scale or speed.

Seedance 2.0 integrates directly into workflows that already require rapid iteration. Instead of waiting for concept sketches, filming schedules, and editing rounds, teams can generate preview-ready scenes from structured descriptions.

This changes the early stages of creative planning. Concepts can be visualized immediately. Stakeholders evaluate concrete outputs rather than abstract storyboards. Decisions move forward based on visible drafts rather than hypothetical discussions.

By shifting visual exploration upstream, Seedance 2.0 reduces dependence on extended production cycles.

Reliability Beyond Early AI Video Models

Early generative video tools demonstrated potential but often lacked consistency.

Common limitations included:

- Inconsistent lighting across frames

- Character identity drift

- Unnatural motion and weightless movement

- Disconnected environmental logic

These inconsistencies limited commercial viability. While outputs were impressive at first glance, extended scenes often revealed structural weaknesses.

Seedance 2.0 addresses these issues through a unified scene-processing engine. Instead of generating elements independently, the system evaluates lighting, motion, character continuity, and environmental depth as components of a single scene.

The result is greater stability across frames and fewer breakdowns in extended sequences. Professionals tend to notice this quickly because outputs require fewer corrective edits.

Unified Scene Interpretation

One of the key improvements in Seedance 2.0 is its integrated architectural design. Rather than separating motion modeling, environmental generation, and character rendering into loosely coordinated modules, the platform treats each component as part of one coherent system.

This unified processing approach yields several advantages:

- Lighting remains contextually aligned with scene conditions

- Motion reflects grounded physical logic

- Environmental depth maintains spatial consistency

- Character features persist across frames

When all variables are evaluated together, visual mismatches become less likely. For production teams, this translates into fewer revisions and more dependable first-pass results.

Reducing the Cost of Iteration

Traditional visual experimentation carries tangible cost. New angles, alternate scripts, or stylistic variations often require additional filming sessions or re-edits. As a result, teams sometimes avoid exploring alternatives.

Seedance 2.0 changes this cost equation. Generating multiple scene variations requires minimal additional setup. Teams can refine messaging and tone through prompt adjustments rather than physical reshoots.

This speeds up decision-making. Instead of debating theoretical improvements, stakeholders review alternative visual executions directly. Iteration becomes practical rather than expensive.

Organizations gain stronger alignment before committing resources to final production phases.
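
As a rough illustration of iterating through prompt adjustments rather than reshoots, the sketch below builds one prompt for every combination of a few variation fields. It does not call Seedance 2.0 or any real API; the scene text, the field names, and the `build_prompts` helper are all assumptions made for illustration.

```python
from itertools import product

# Hypothetical base scene and variation fields.
# None of these names come from Seedance 2.0 itself.
BASE_SCENE = "A barista hands a customer a coffee in a sunlit cafe"

VARIATIONS = {
    "camera": ["wide shot", "close-up"],
    "tone": ["warm and friendly", "calm and minimal"],
}


def build_prompts(base, variations):
    """Return one prompt string per combination of variation values."""
    keys = list(variations)
    prompts = []
    for combo in product(*(variations[k] for k in keys)):
        # Append each field as "name: value" after the base description.
        details = ", ".join(f"{k}: {v}" for k, v in zip(keys, combo))
        prompts.append(f"{base}. {details}")
    return prompts


if __name__ == "__main__":
    for prompt in build_prompts(BASE_SCENE, VARIATIONS):
        print(prompt)
```

Each resulting string could then be submitted through whatever generation interface a team uses, so stakeholders can compare the alternatives side by side.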

Compressed Production Timelines

Standard production pipelines rely on several logistical dependencies:

- Equipment availability

- Crew scheduling

- Location preparation

- Post-production editing

- Audio and visual integration

Seedance 2.0 condenses these layers into a prompt-driven workflow. While it does not eliminate the value of traditional production for high-budget campaigns, it dramatically reduces the time required for concept validation, internal content, and digital-first materials.

Teams focus more on refining ideas and less on coordinating logistics. The speed of execution begins to match the pace of digital distribution platforms.

Improved Motion and Physical Realism

Earlier generative video tools struggled with believable motion. Characters appeared weightless, gestures felt disconnected from gravity, and action sequences lacked spatial coherence.

Seedance 2.0 shows clear improvement in modeling physical logic. Movement reflects pacing, balance, and environmental resistance more accurately. Characters behave within realistic physical constraints rather than arbitrary animation rules.

This enhancement expands practical use cases, including:

- Instructional demonstrations

- Narrative storytelling

- Marketing sequences requiring credible interaction

- Internal simulations

Improved motion fidelity increases viewer trust and broadens commercial viability.

Supporting Long-Form and Extended Narratives

Maintaining identity and continuity across extended clips has been a persistent challenge in AI-generated video. Minor inconsistencies compound over time, undermining narrative coherence.

Seedance 2.0 stabilizes longer sequences by preserving identity attributes and environmental consistency throughout a clip.

This allows teams to create:

- Multi-shot training modules

- Branded narrative sequences

- Structured marketing explainers

- Extended visual briefings

Longer-form content becomes feasible without the fragmentation seen in earlier models.

Accessibility Across Diverse Teams

One of Seedance 2.0’s most significant operational advantages is accessibility. Prompt-based generation allows non-technical professionals to visualize ideas without requiring specialized production knowledge.

Strategists can prototype campaign visuals. Analysts can create illustrative explainers. Creatives can iterate independently before involving external production partners.

This reduces bottlenecks and enhances collaboration across departments. Instead of waiting for resource allocation, teams move ideas forward immediately.

Accessibility does not eliminate traditional production roles; rather, it augments early-stage development and accelerates decision cycles.

Shifting Economics of Visual Creation

When high-quality visual generation becomes accessible through natural language prompts, the economics of content production shift. Access to equipment and crew becomes less central to initial content